Entropy | Definition, Equation, Formula & Unit

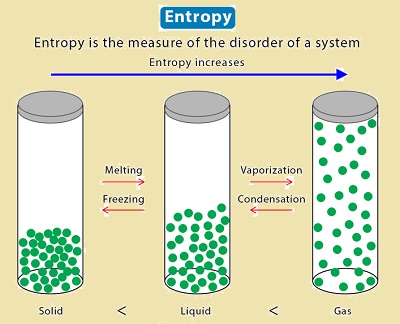

Entropy is one of the key ideas that students of physics and chemistry need to grasp clearly. It has multiple definitions and is used in a variety of contexts, including thermodynamics, cosmology, and even economics. In essence, entropy refers to the universe’s tendency toward disorder and to the spontaneous changes that take place in everyday phenomena.

Entropy is more than an abstract scientific concept: it is a measurable physical property, most often associated with uncertainty. The term is used in classical thermodynamics, statistical physics, and information theory. Beyond physics and chemistry, it has applications in sociology, weather science, and the study of climate change, as well as in quantifying information transmission in telecommunication.

What Is Entropy?

Entropy is typically described as a measure of a system’s randomness or disorder. The German physicist Rudolf Clausius first proposed the idea in 1850. Beyond this general description, the concept has numerous definitions. This page examines two of them: the thermodynamic definition and the statistical definition. The thermodynamic view of entropy does not take the microscopic details of a system into account. Instead, it describes the behavior of a system in terms of thermodynamic properties such as temperature, pressure, and heat capacity. This thermodynamic description applies to systems in an equilibrium state.

The statistical definition, developed later, describes the thermodynamic properties in terms of the statistics of a system’s molecular motions. In this view, entropy is a measure of molecular disorder.

Other popular interpretations of entropy are as follows:

- In quantum statistical mechanics, Von Neumann extended the idea of entropy to the quantum domain by using the density matrix.

- In information theory, it gauges how effectively a system transmits signals or how much information is lost during transmission.

- In dynamical systems, it describes increasing complexity and measures the average information flow per unit of time.

- In sociology, entropy is the social deterioration or organic decay of a social system’s structure (such as law, organization, and convention).

- In cosmology, it is defined as the hypothetical tendency of the universe to reach a state of maximum homogeneity, in which matter is at a uniform temperature.

In any case, the term “entropy” is now frequently used in fields far removed from physics and mathematics, and in those uses it has lost its strict quantitative nature.

Properties of Entropy

- It is a thermodynamic function.

- Entropy is a state function. It depends on the state of the system and not on the path followed to reach it.

- It is represented by S, but in the standard state, it is represented by S°.

- The SI unit of entropy is J/K; molar entropy is expressed in J/(K·mol).

- The CGS unit of entropy is cal/K; molar entropy is expressed in cal/(K·mol).

- Entropy scales with a system’s size or extent because it is an extensive property.

Note: In an isolated system, a spontaneous change increases disorder, so entropy rises. Entropy also rises when the reactants of a chemical reaction split into a larger number of product particles. A system at a higher temperature exhibits more randomness than the same system at a lower temperature. These examples show that entropy rises as regularity decreases.

Order of entropy: gas > liquid > solid

Entropy Change and Calculations

The entropy change of a process is defined as the amount of heat absorbed or released isothermally and reversibly, divided by the absolute temperature. The entropy formula is:

∆S = qrev,iso/T

If the same amount of heat is supplied at a higher temperature and at a lower temperature, the increase in randomness is greater at the lower temperature. For a given amount of heat, the entropy change is therefore inversely proportional to the temperature.

Total entropy change, ∆Stotal = ∆Ssystem + ∆Ssurroundings

The total entropy change is the sum of the entropy changes of the system and the surroundings.

Suppose the system loses an amount of heat q at temperature T1, and the surroundings receive it at temperature T2. Then ∆Stotal can be calculated as follows:

∆Ssystem = -q/T1

∆Ssurroundings = q/T2

∆Stotal = -q/T1 + q/T2

- If ∆Stotal is positive, the process is spontaneous.

- If ∆Stotal is negative, the process is non-spontaneous.

- If ∆Stotal is zero, the process is at equilibrium.
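The calculation and the sign test above can be sketched in a few lines of Python. This is only an illustration: the function names `total_entropy_change` and `classify`, the tolerance `tol`, and the numbers (1000 J flowing from a body at 400 K to surroundings at 300 K) are assumptions chosen for the example, not values from the text.

```python
def total_entropy_change(q, t_system, t_surroundings):
    """Return (dS_system, dS_surroundings, dS_total) in J/K.

    q is the heat (J) lost by the system at t_system (K) and
    received by the surroundings at t_surroundings (K).
    """
    if t_system <= 0 or t_surroundings <= 0:
        raise ValueError("absolute temperatures must be positive")
    ds_system = -q / t_system            # system loses heat q at T1
    ds_surroundings = q / t_surroundings  # surroundings gain heat q at T2
    return ds_system, ds_surroundings, ds_system + ds_surroundings


def classify(ds_total, tol=1e-12):
    """Label a process from the sign of the total entropy change."""
    if ds_total > tol:
        return "spontaneous"
    if ds_total < -tol:
        return "non-spontaneous"
    return "equilibrium"


# Hypothetical numbers: heat flows from a hot body to cooler surroundings.
ds_sys, ds_surr, ds_tot = total_entropy_change(1000.0, 400.0, 300.0)
print(f"dS_system = {ds_sys:.3f} J/K")
print(f"dS_surroundings = {ds_surr:.3f} J/K")
print(f"dS_total = {ds_tot:.3f} J/K -> {classify(ds_tot)}")
```

Swapping the two temperatures makes ∆Stotal negative, which matches the everyday observation that heat does not flow from cold to hot on its own.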

Points to Remember

A spontaneous process is thermodynamically irreversible. Given enough time, an irreversible process brings the system to equilibrium.

Entropy change during the isothermal reversible expansion of an ideal gas

∆S = qrev,iso/T

According to the first law of thermodynamics,

∆U=q+w

For the isothermal expansion of an ideal gas, ∆U = 0

qrev = -wrev = nRT ln(V2/V1)

Therefore,

∆S = nR ln(V2/V1)
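As a quick numerical check of this result, the sketch below evaluates ∆S for one mole of ideal gas doubling its volume and confirms that qrev/T gives the same number. The function names, the rounded gas constant R = 8.314 J/(K·mol), and the chosen volumes and temperature are illustrative assumptions.

```python
import math

R = 8.314  # gas constant in J/(K·mol), rounded


def isothermal_entropy_change(n, v1, v2):
    """Entropy change (J/K) for isothermal expansion of n mol of ideal gas."""
    return n * R * math.log(v2 / v1)


def isothermal_reversible_heat(n, temperature, v1, v2):
    """Heat absorbed (J) in the reversible isothermal expansion: q_rev = nRT ln(V2/V1)."""
    return n * R * temperature * math.log(v2 / v1)


# Hypothetical case: 1 mol expands from 10 L to 20 L at 298.15 K.
# Only the volume ratio matters, so any consistent volume unit works.
ds = isothermal_entropy_change(1.0, 10.0, 20.0)
q_rev = isothermal_reversible_heat(1.0, 298.15, 10.0, 20.0)
print(f"dS = {ds:.3f} J/K")
print(f"q_rev / T = {q_rev / 298.15:.3f} J/K")
```

Because the logarithm changes sign when V2 < V1, the same function returns a negative ∆S for compression.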

Entropy Change During Reversible Adiabatic Expansion

Heat exchange will be zero for an adiabatic process (q = 0), so reversible adiabatic expansion is occurring at a constant entropy (isentropic).

q = 0

Therefore,

∆S = 0

Unlike the reversible case, an irreversible adiabatic expansion is not isentropic: entropy is generated within the system even though no heat is exchanged.

∆S > 0
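One standard illustration of the irreversible case is the free expansion of an ideal gas into a vacuum, which is not discussed in the text and is introduced here as an assumption. No heat is exchanged and no work is done, so the temperature of an ideal gas stays the same. Because entropy is a state function, ∆S equals the value for a reversible isothermal path between the same two states. The function name and numbers below are illustrative.

```python
import math

R = 8.314  # gas constant in J/(K·mol), rounded


def free_expansion_entropy_change(n, v1, v2):
    """Entropy change (J/K) of n mol of ideal gas expanding freely from v1 to v2.

    q = 0 and w = 0, so T is unchanged; dS is evaluated along a
    reversible isothermal path between the same end states.
    """
    return n * R * math.log(v2 / v1)


# Hypothetical case: 1 mol doubles its volume into an evacuated vessel.
ds_system = free_expansion_entropy_change(1.0, 1.0, 2.0)
ds_surroundings = 0.0  # adiabatic: the surroundings receive no heat
ds_total = ds_system + ds_surroundings
print(f"dS_system = {ds_system:.3f} J/K, dS_total = {ds_total:.3f} J/K")
```

Here ∆Stotal is positive even though q = 0, which is what marks the process as spontaneous and irreversible.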

Entropy Changes During Phase Transition

Entropy of Fusion

Entropy increases when a solid melts into a liquid. The phase change gives the molecules more freedom of movement, which increases entropy. The entropy of fusion is the enthalpy of fusion divided by the melting point (fusion temperature).

∆fusS = ∆fusH / Tf

A natural process such as a phase transition (for instance, fusion) takes place when the associated change in the Gibbs free energy is negative.

In most cases, ∆fusS is positive.
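A short worked example may help. The sketch below applies the formula to the melting of ice, assuming the commonly quoted approximate values ∆fusH ≈ 6.01 kJ/mol and Tf = 273.15 K; these numbers and the function name are not from the text and should be checked against a data table before being relied on.

```python
def entropy_of_fusion(delta_h_fus, t_fusion):
    """Molar entropy of fusion in J/(K·mol).

    delta_h_fus: molar enthalpy of fusion in J/mol
    t_fusion: melting point in K
    """
    if t_fusion <= 0:
        raise ValueError("melting point must be a positive absolute temperature")
    return delta_h_fus / t_fusion


# Assumed approximate data for water ice at 1 atm.
ds_fus_water = entropy_of_fusion(6010.0, 273.15)
print(f"Entropy of fusion of ice: {ds_fus_water:.1f} J/(K·mol)")
```

The result is positive, as expected for a solid turning into a more disordered liquid.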

Exception

Helium-3 has a negative entropy of fusion at temperatures below 0.3 K. Helium-4 also has a very slightly negative entropy of fusion below 0.8 K.

Entropy of Vaporisation

When a liquid turns into a vapour, its entropy increases; this increase is known as the entropy of vaporization. It results from the greater, more random motion of the molecules in the vapour. The entropy of vaporization is the enthalpy of vaporization divided by the boiling point:

∆vapS = ∆vapH / Tb
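The same kind of check works for vaporization. The sketch below uses water, assuming the commonly quoted approximate values ∆vapH ≈ 40.65 kJ/mol and Tb = 373.15 K at 1 atm; the numbers and the function name are illustrative assumptions.

```python
def entropy_of_vaporization(delta_h_vap, t_boiling):
    """Molar entropy of vaporization in J/(K·mol).

    delta_h_vap: molar enthalpy of vaporization in J/mol
    t_boiling: boiling point in K
    """
    if t_boiling <= 0:
        raise ValueError("boiling point must be a positive absolute temperature")
    return delta_h_vap / t_boiling


# Assumed approximate data for water at its normal boiling point.
ds_vap_water = entropy_of_vaporization(40650.0, 373.15)
print(f"Entropy of vaporization of water: {ds_vap_water:.1f} J/(K·mol)")
```

With these inputs the entropy of vaporization comes out several times larger than a typical entropy of fusion, consistent with the ordering gas > liquid > solid.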

Standard Entropy of Formation of a Compound

It is the change in entropy that occurs when one mole of a compound in the standard state is created from its component elements.

Spontaneity

Exothermic reactions are often spontaneous because ∆Ssurroundings is positive, which tends to make ∆Stotal positive.

Endothermic reactions can also be spontaneous: ∆Ssurroundings is negative, but if ∆Ssystem is positive and large enough, ∆Stotal is still positive.

For predicting spontaneity, the free energy criterion is preferable to the entropy criterion because the former requires only the change in the system’s free energy, while the latter also requires the entropy change of the surroundings.
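The link between the two criteria can be sketched numerically. At constant temperature and pressure, ∆Ssurroundings = -∆H/T and ∆G = ∆H - T∆Ssystem, so ∆G = -T∆Stotal. These relations are standard but are not stated in the text, so they are assumptions of this example. The sketch reuses approximate values for melting ice (∆H ≈ 6010 J/mol, ∆Ssystem ≈ 22.0 J/(K·mol)) and treats them as temperature-independent, which is a simplification.

```python
def gibbs_energy_change(delta_h, delta_s_system, temperature):
    """Gibbs free energy change (J/mol): dG = dH - T * dS_system."""
    return delta_h - temperature * delta_s_system


def total_entropy_change_from_system(delta_h, delta_s_system, temperature):
    """Total entropy change (J/(K·mol)) at constant T and p.

    The surroundings receive heat -dH at temperature T.
    """
    delta_s_surroundings = -delta_h / temperature
    return delta_s_system + delta_s_surroundings


# Assumed approximate data for melting ice (an endothermic process).
DELTA_H = 6010.0   # J/mol
DELTA_S = 22.0     # J/(K·mol)

for temperature in (263.15, 298.15):
    dg = gibbs_energy_change(DELTA_H, DELTA_S, temperature)
    ds_total = total_entropy_change_from_system(DELTA_H, DELTA_S, temperature)
    verdict = "spontaneous" if dg < 0 else "non-spontaneous"
    print(f"T = {temperature} K: dG = {dg:.1f} J/mol, "
          f"dS_total = {ds_total:.3f} J/(K·mol) -> {verdict}")
```

Both quantities give the same verdict, but ∆G needs only system properties, which is why it is usually the more convenient test.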

Negentropy

It is entropy’s opposite. It denotes a greater sense of order. Order here refers to organization, structure, and purpose. It is the antithesis of chaos or randomness.